🧍‍♂️ Biography

I received my B.S. degree in 2023 from the Department of Statistics and Information Science at Fu Jen Catholic University (FJCU), Taiwan, where I was advised by Prof. Hao-Chiang Shao. I completed my M.S. degree in 2025 at National Cheng Kung University (NCKU), where I conducted research at the Advanced Computer Vision Laboratory (ACVLAB) under the mentorship of Prof. Chih-Chung Hsu. I also conducted research at the Computational Photography Laboratory (CPLAB) at National Yang Ming Chiao Tung University (NYCU), working with Prof. Yu-Lun Liu. I will be pursuing my Ph.D. at the University at Albany, State University of New York (SUNY Albany), where I will conduct research at the Computer Vision and Machine Learning Laboratory (CVML LAB) under the co-supervision of Prof. Ming-Ching Chang and Prof. Xin Li.

Find my resume here (last updated Jan 17, 2026).

My research interests include, but are not limited to, Low-level Vision Problems, Computational Photography, Hyperspectral Image Processing, Multimedia Analysis and Security, Efficient AI and Model Acceleration, and Medical Image Analysis.

I am always open to research collaborations. If you are interested in working together or simply want to exchange ideas, please feel free to reach out to me via email.

In my free time, I enjoy traveling ✈️, capturing moments through photography 📸, and making desserts 🍰.

📢 News & Achievements

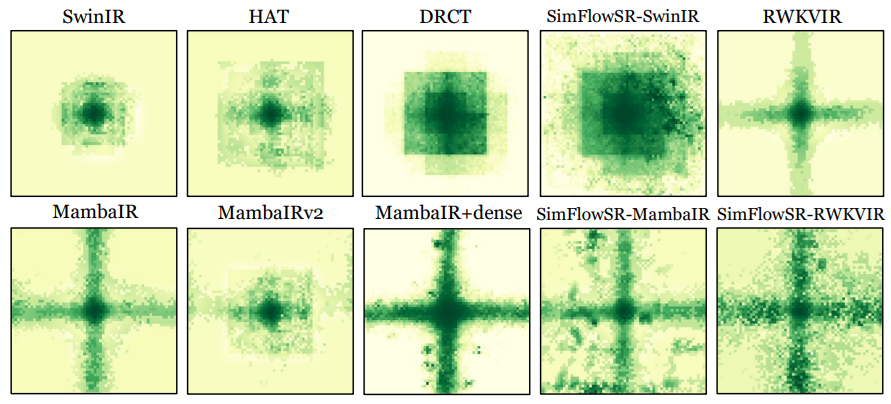

SimFlowSR: Consistent Information Flow with Self-similarity Aggregation for Single Image Super-Resolution

Chia-Ming Lee, Chih-Chung Hsu

Recovers fine image details by aggregating recurring self-similar patterns across the image while maintaining consistent information flow through the network, preventing useful texture signals from fading in deep layers.

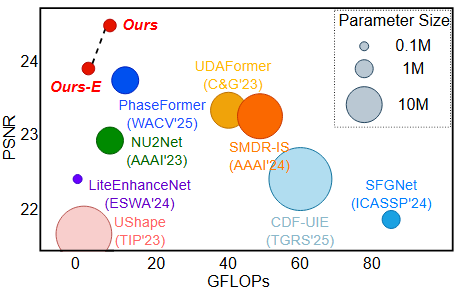

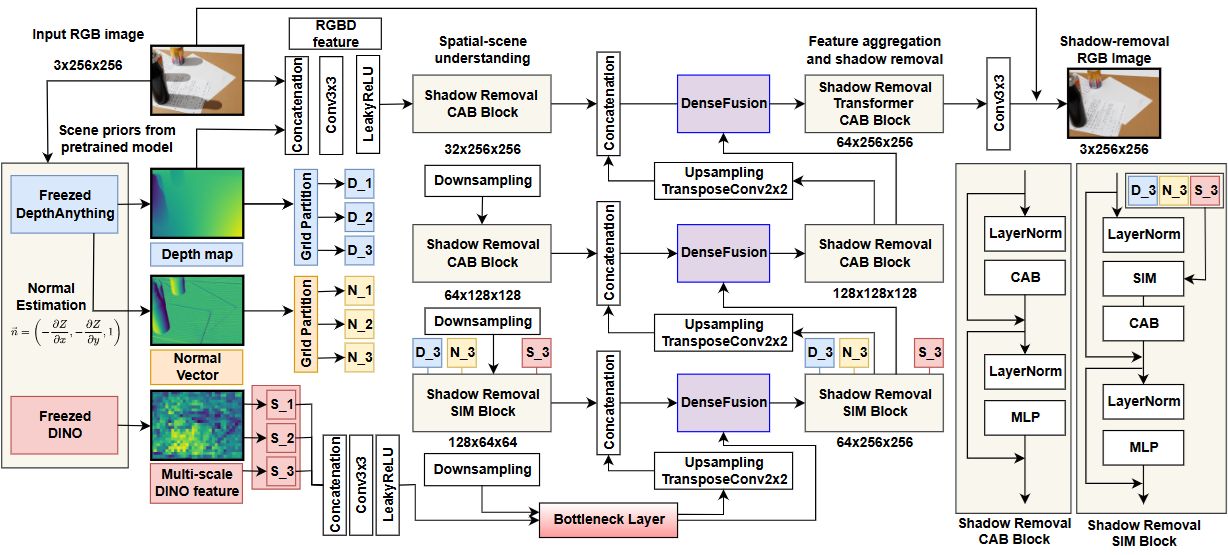

PhaSR: Generalized Image Shadow Removal with Physically Aligned Priors

Chia-Ming Lee, Yu-Fan Lin, Yu-Jou Hsiao, Jin-Hui Jiang, Yu-Lun Liu, Chih-Chung Hsu

Removes shadows from images by incorporating physical light models and geometric priors, enabling robust restoration across diverse real-world scenes and lighting conditions without retraining for each domain.

ReflexSplit: Single Image Reflection Separation via Layer Fusion–Separation

Chia-Ming Lee, Yu-Fan Lin, Jin-Hui Jiang, Yu-Jou Hsiao, Chih-Chung Hsu, Yu-Lun Liu

Separates reflected and transmitted layers in a single photo by alternating between fusing and splitting mixed features, teaching the network to disentangle overlapping visual signals that are hard to distinguish.

WWE-UIE: A Wavelet & White Balance Efficient Network for Underwater Image Enhancement

Ching-Heng Cheng, Jen-Wei Lee, Chia-Ming Lee, Chih-Chung Hsu

Enhances murky underwater photos by combining wavelet-based frequency analysis with white balance correction, efficiently restoring natural colors and recovering details lost to water scattering.

DenseSR: Image Shadow Removal as Dense Prediction

Yu-Fan Lin, Chia-Ming Lee, Chih-Chung Hsu

Reframes shadow removal as a per-pixel dense prediction task, allowing the model to jointly estimate shadow regions and restore the underlying colors in a single unified pass.

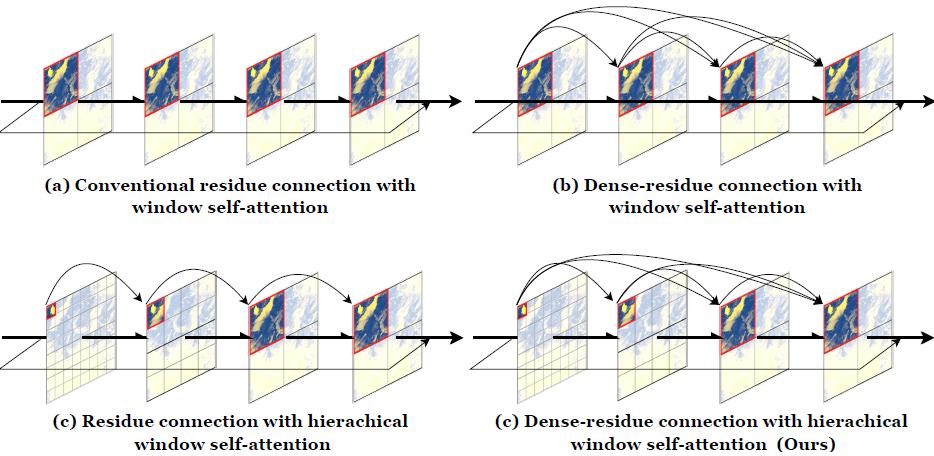

DRCT: Saving Image Super-Resolution away from Information Bottleneck

Chih-Chung Hsu, Chia-Ming Lee, Yi-Shiuan Chou

Keeps high-frequency image details alive throughout the super-resolution process by introducing dense residual connections in a transformer backbone, preventing fine textures from being discarded in deep layers.

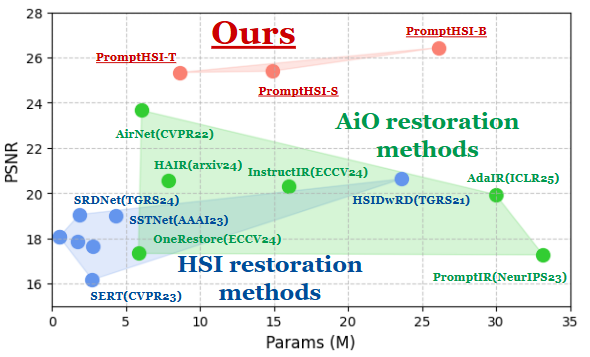

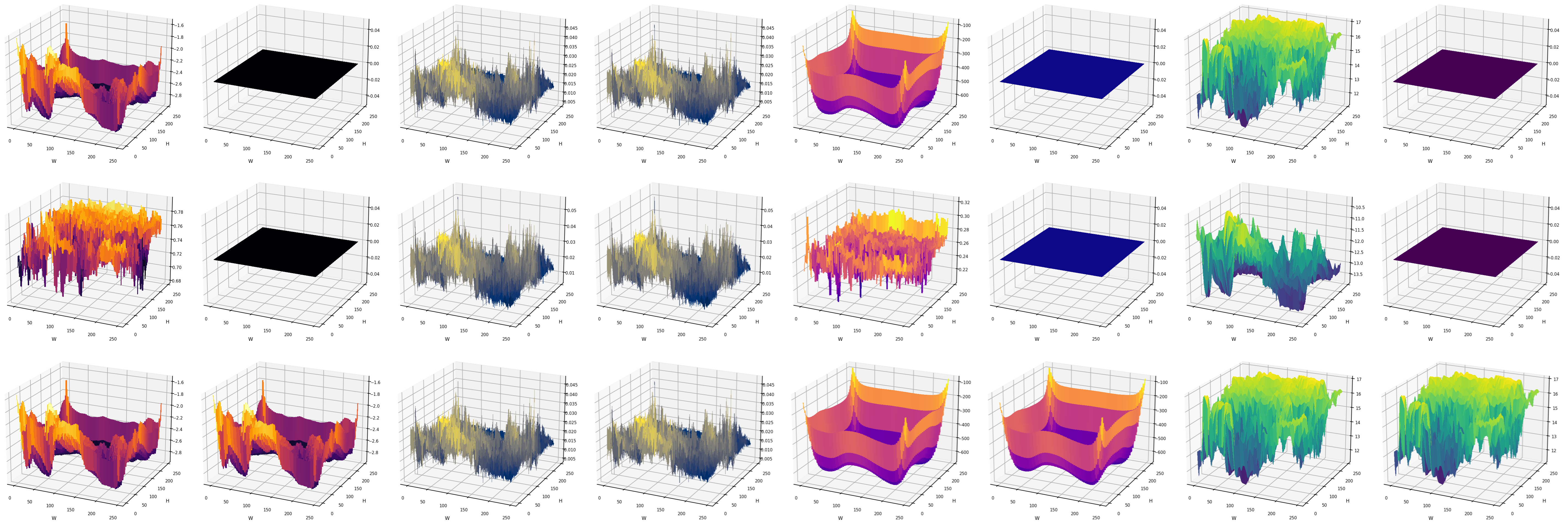

PromptHSI: Universal Hyperspectral Image Restoration with Vision-Language Modulated Frequency Adaptation

Chia-Ming Lee, Ching-Heng Cheng, Yu-Fan Lin, Yi-Ching Cheng, Wo-Ting Liao, Chih-Chung Hsu, Fu-En Yang, Yu-Chiang Frank Wang

Restores degraded hyperspectral images using text prompts to guide frequency-domain adaptation, allowing a single model to handle multiple types of noise and distortion without task-specific retraining.

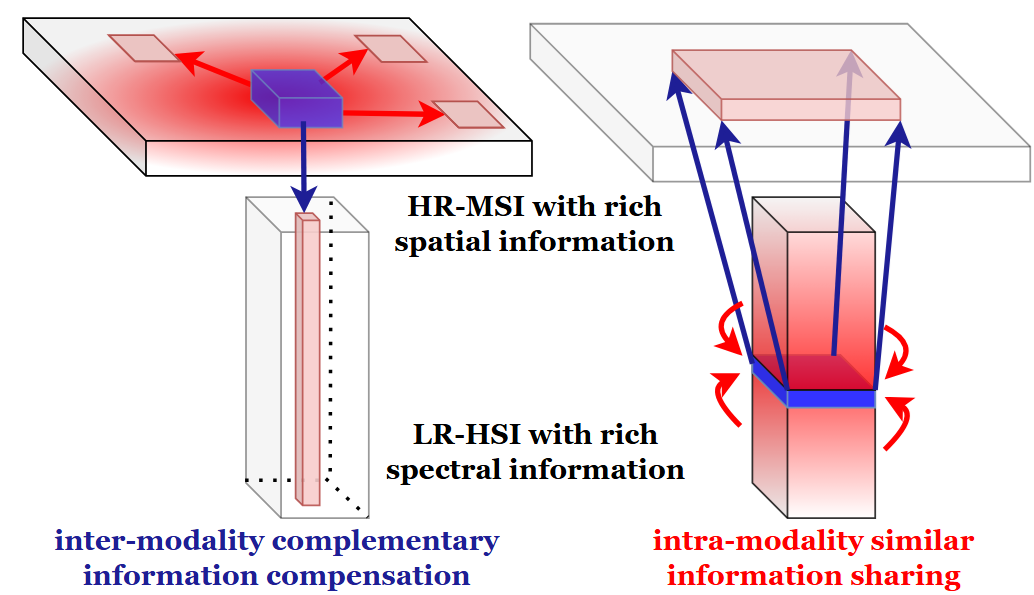

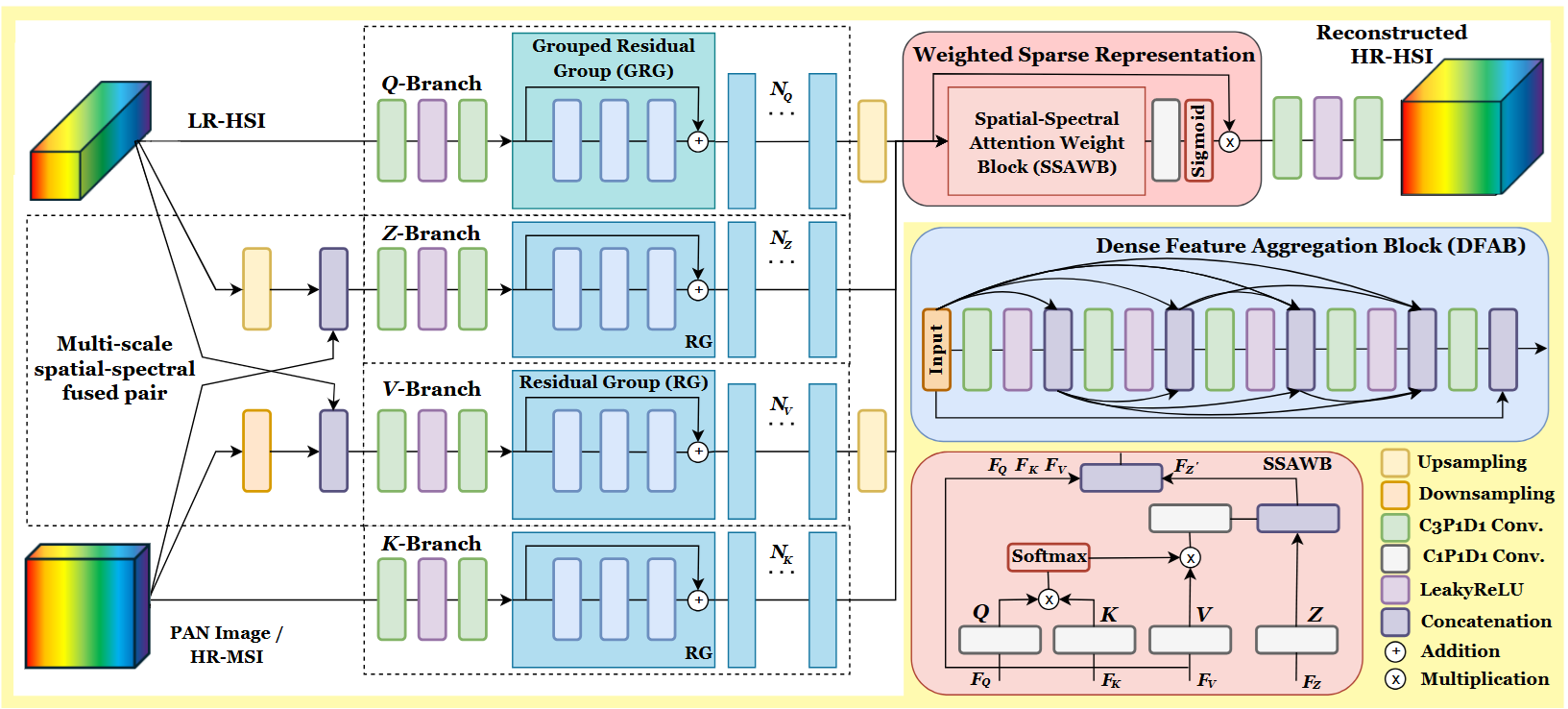

HSSDCT: Factorized Spatial-Spectral Correlation for Hyperspectral Image Fusion

Chia-Ming Lee, Yu-How He, Yu-Fan Lin, Jen-Wei Lee, Chih-Chung Hsu, Li-Wei Kang

Fuses low-resolution hyperspectral and high-resolution RGB images by factorizing spatial and spectral correlations separately, producing sharp, spectrally accurate results with reduced computational cost.

AuroraHSI: An End-to-End Hyperspectral Image Fusion Method for Degraded Imagery

Chia-Ming Lee, Cheng-Jun Kang, Ching-Heng Cheng, Yu-Fan Lin, Yi-Shiuan Chou, Chih-Chung Hsu, Fu-En Yang, Yu-Chiang Frank Wang

Simultaneously denoises and fuses degraded hyperspectral imagery in a single end-to-end pipeline, recovering both spatial sharpness and spectral fidelity even when the input is corrupted by multiple types of real-world noise.

S3RNet: Sparse Spatial-Spectral Representation with Hybrid Knowledge Distillation for Efficient Hyperspectral Image Pansharpening

Chia-Ming Lee, Yu-Fan Lin, Li-Wei Kang, Chih-Chung Hsu

Sharpens hyperspectral images efficiently by using sparse representations to capture the most informative spatial-spectral patterns, while knowledge distillation from a larger teacher model compensates for the reduced capacity.

Robust Hyperspectral Image Pansharpening via Sparse Spatial-Spectral Representation

Chia-Ming Lee, Yu-Fan Lin, Li-Wei Kang, Chih-Chung Hsu

Enhances the spatial resolution of hyperspectral images by learning sparse representations that capture the most salient spatial and spectral features, maintaining robustness against noise and misalignment.

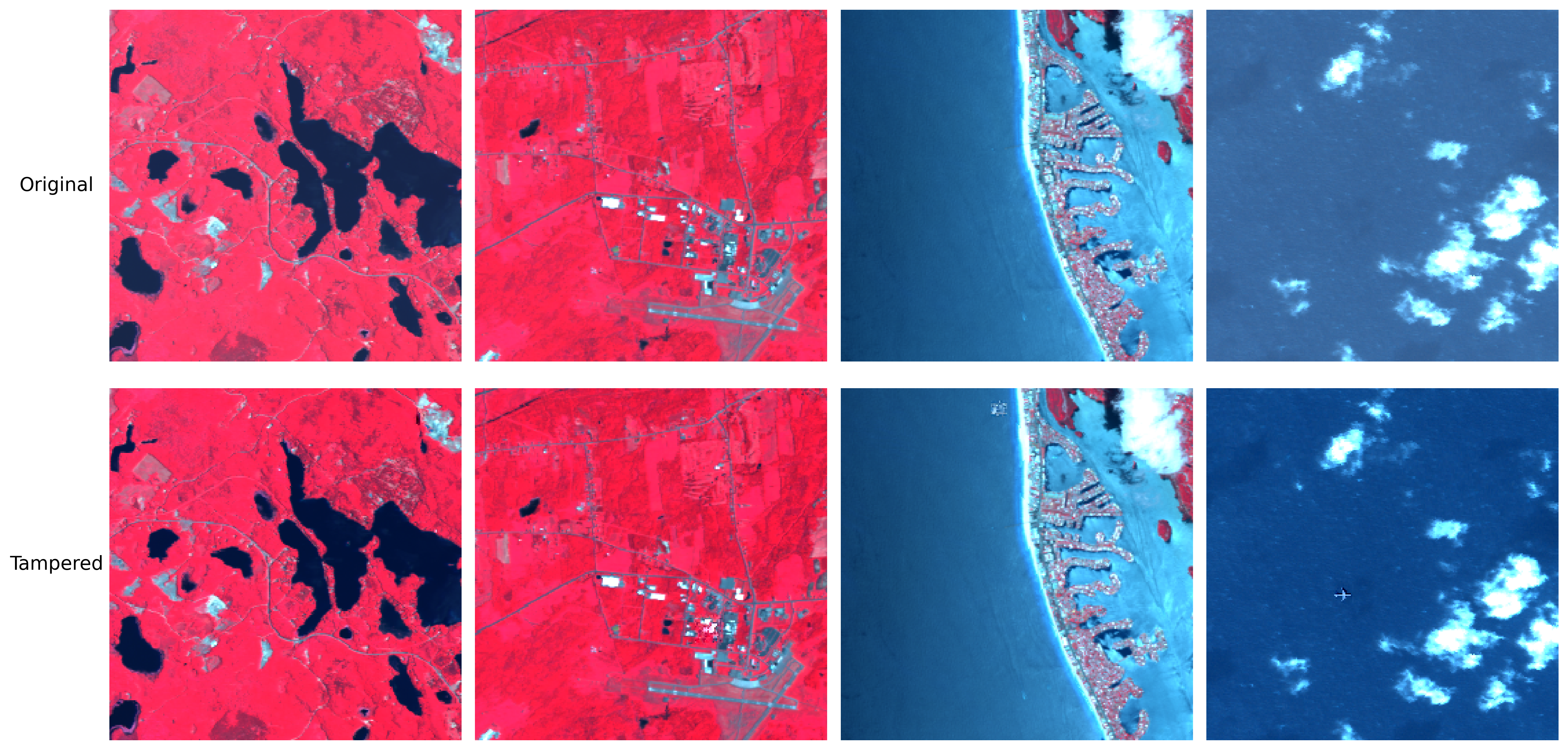

HyperForensics++: Toward Adversarial Perturbed and Object Replacement in Hyperspectral Imaging Dataset

Chih-Chung Hsu, Chia-Ming Lee, Yu-Fan Lin, Min-Zo Ko, En-Zhao Liu, Yi-Ching Cheng, Ming-Ching Chang

Introduces a benchmark dataset and detection framework for hyperspectral image forensics, targeting adversarial perturbations and object replacement attacks that are invisible in RGB but detectable across spectral bands.

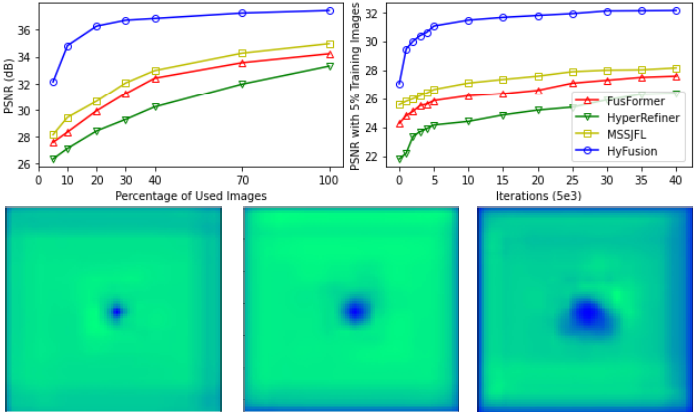

HyFusion: Enhanced Reception Field Transformer for Hyperspectral Image Fusion

Chia-Ming Lee, Yu-Fan Lin, Yu-Hao Ho, Chih-Chung Hsu, Li-Wei Kang

Improves hyperspectral image fusion by enlarging the transformer's receptive field, enabling the model to capture long-range spatial dependencies that are critical for preserving fine structural details in the fused output.

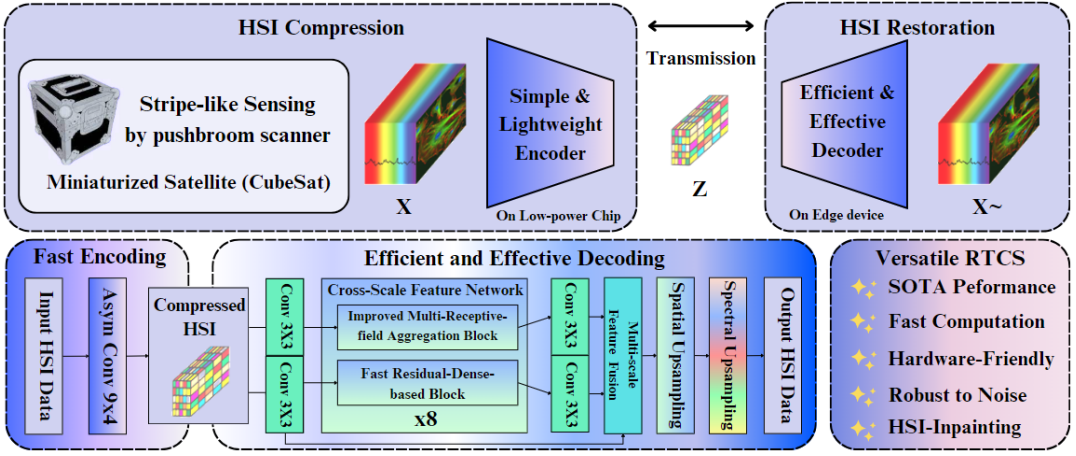

Real-Time Compressed Sensing for Joint Hyperspectral Image Transmission and Restoration for CubeSat

Chih-Chung Hsu, Chih-Yu Jian, Eng-Shen Tu, Chia-Ming Lee, Guan-Lin Chen

Enables tiny CubeSat satellites to transmit hyperspectral data in real time by compressing imagery on-board via compressed sensing and jointly restoring it on the ground, trading bandwidth for fidelity under strict power constraints.

ELSA: Exact Linear-Scan Attention for Fast and Memory-Light Vision Transformers

Chih-Chung Hsu, Xin-Di Ma, Wo-Ting Liao, Chia-Ming Lee

Speeds up vision transformers by replacing standard quadratic attention with a hardware-friendly linear scan, producing exactly the same results at a fraction of the memory and compute cost, with no approximation involved.

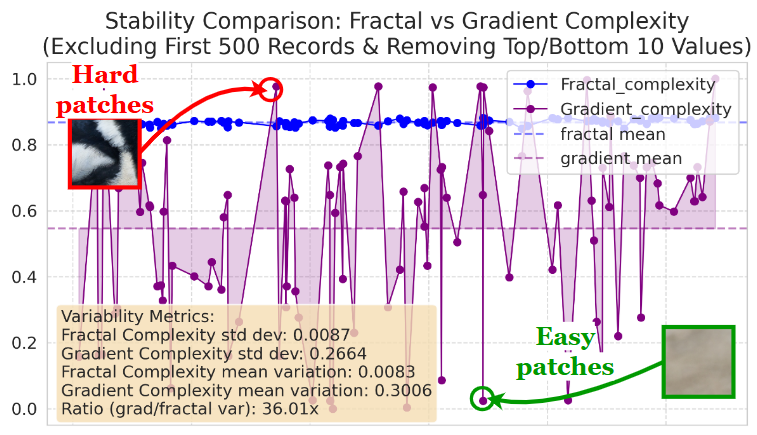

FracQuant: Fractal Complexity Assessment for Content-aware Image Super-resolution Network Quantization

Chia-Ming Lee, Yu-Fan Lin, Fu-En Yang, Yu-Chiang Frank Wang, Chih-Chung Hsu

Compresses super-resolution networks more intelligently by measuring the fractal complexity of each image region, allocating higher precision where detail matters and lower precision where the content is simple.

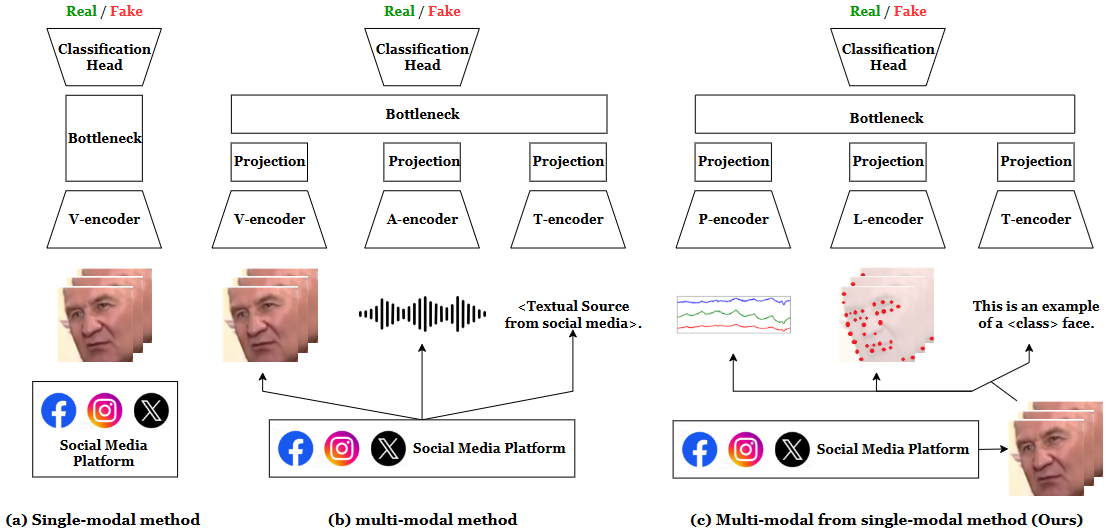

UMCL: Unimodal-generated Multimodal Contrastive Learning for Cross-compression-rate Deepfake Detection

Ching-Yi Lai, Chih-Yu Jian, Pei-Cheng Chuang, Chia-Ming Lee, Chih-Chung Hsu, Chiou-Ting Hsu, Chia-Wen Lin

Detects deepfakes robustly across different video compression levels by synthesizing multimodal training signals from a single modality, using contrastive learning to keep real and fake representations well-separated even when compression artifacts obscure subtle forgery traces.

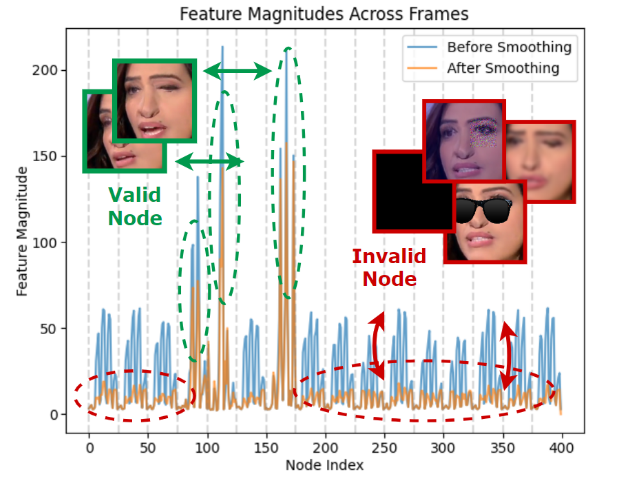

Towards Robust DeepFake Detection under Unstable Face Sequences: Adaptive Sparse Graph Embedding with Order-Free Representation and Explicit Laplacian Spectral Prior

Chih-Chung Hsu, Shao-Ning Chen, Mei-Hsuan Wu, Chia-Ming Lee, Yi-Fang Wang, Yi-Shiuan Chou

Detects deepfakes in low-quality or temporally inconsistent video sequences by modeling facial dynamics as sparse graphs, using order-free representations and spectral graph priors to stay robust against missing frames and heavy compression.

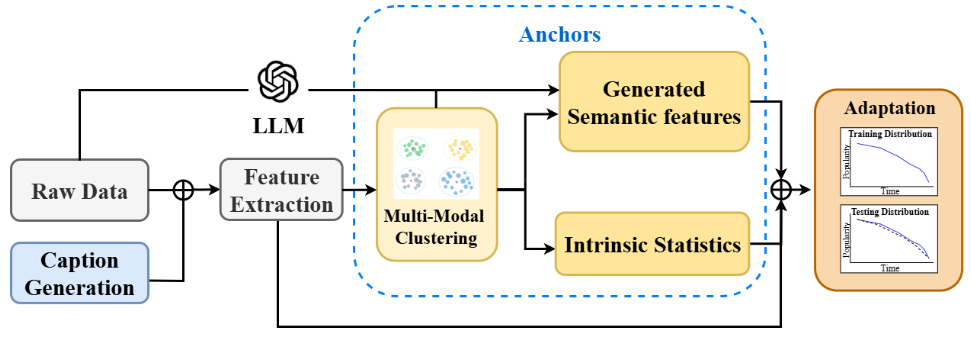

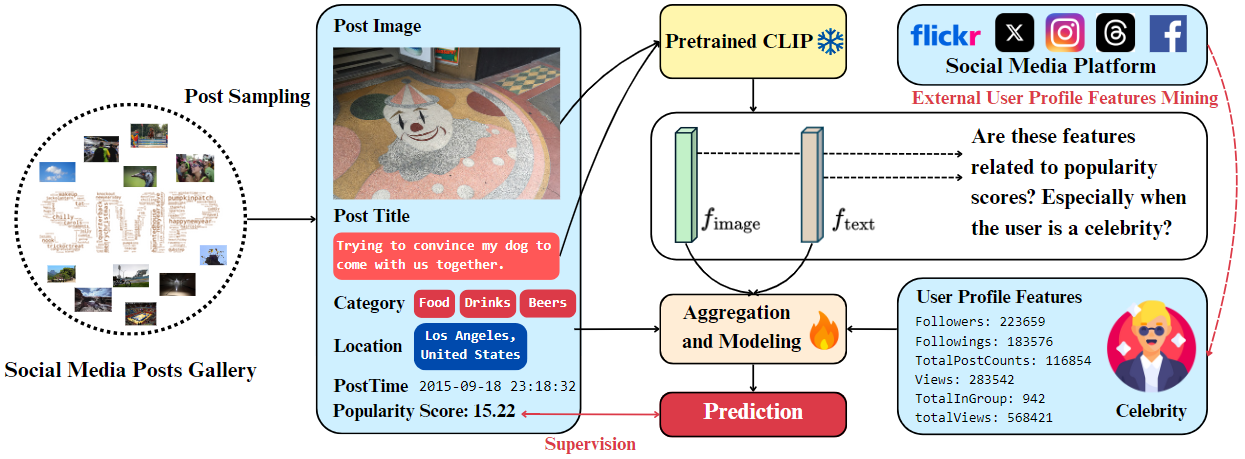

Anchoring Trends: Mitigating Social Media Popularity Prediction Drift via Feature Clustering and Expansion

Chia-Ming Lee, Bo-Cheng Qiu, Cheng-Jun Kang, Yi-Hsuan Wu, Jun-Lin Chen, Yu-Fan Lin, Yi-Shiuan Chou, Chih-Chung Hsu

Addresses prediction drift in social media popularity models by anchoring features to stable trend clusters and using LLM-guided expansion to enrich representations, keeping forecasts accurate as content trends shift over time.

Revisiting Vision-Language Features Adaptation and Inconsistency for Social Media Popularity Prediction

Chih-Chung Hsu, Chia-Ming Lee, Yu-Fan Lin, Yi-Shiuan Chou, Chi-Han Tsai

Predicts social media post popularity by identifying and resolving inconsistencies between visual and language features, using cross-modal adaptation to better capture what makes content resonate with audiences.

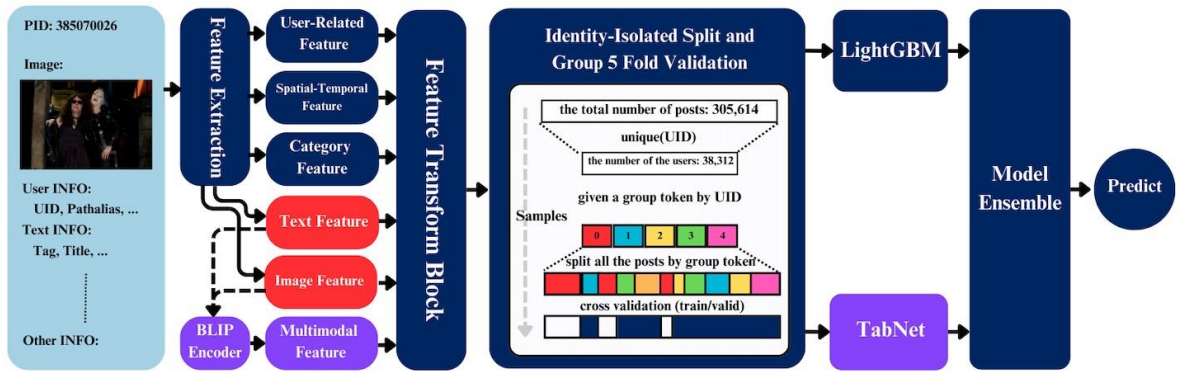

Gradient Boost Tree Network based on Extensive Feature Analysis for Popularity Prediction of Social Posts

Chih-Chung Hsu, Chia-Ming Lee, Xiu-Yu Hou, Chi-Han Tsai

Forecasts social post popularity by combining gradient boosted trees with an extensive set of handcrafted visual, textual, and temporal features, providing strong interpretable baselines for multimodal popularity prediction.

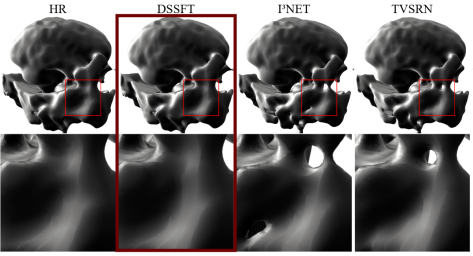

DSSFT: Dense Spatial-Slice Fusion Transformer for Medical Volumetric Super-Resolution

I-An Tsai, Chia-Ming Lee, Shen-Chieh Tai, Chun-Rong Huang, Chih-Chung Hsu

Upscales low-resolution 3D medical scans (e.g., MRI/CT) by densely fusing spatial and slice-wise features across the volume, recovering fine anatomical details that are critical for accurate clinical diagnosis.

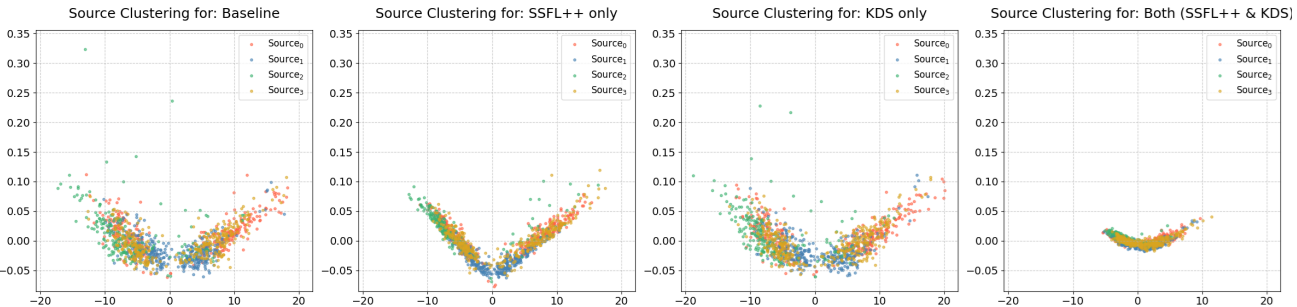

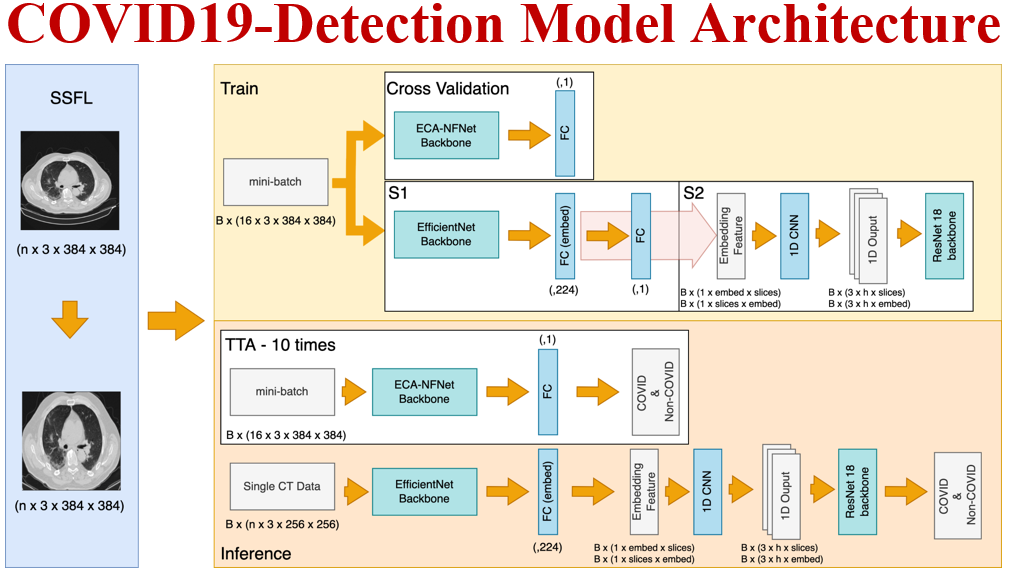

Taming Domain Shift in Multi-source CT-Scan Classification via Input-Space Standardization

Chia-Ming Lee, Bo-Cheng Qiu, Ting-Yao Chen, Ming-Han Sun, Fang-Ying Lin, Jung-Tse Tsai, I-An Tsai, Chih-Chung Hsu

Improves COVID-19 detection across CT scans from different hospitals by standardizing inputs at the image level before they enter the model, reducing domain gap without requiring access to target domain labels.

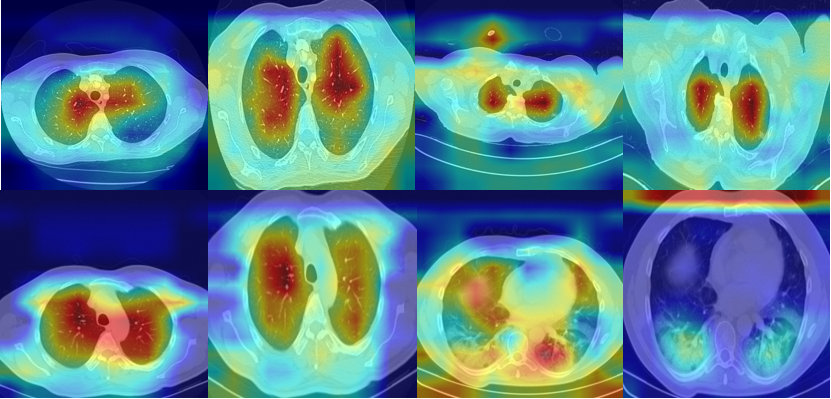

A Closer Look at Spatial-Slice Features Learning for COVID-19 Detection

Chih-Chung Hsu, Chia-Ming Lee, Yang Fan Chiang, Yi-Shiuan Chou, Chih-Yu Jiang, Shen-Chieh Tai, Chi-Han Tsai

Detects COVID-19 in CT scans by jointly learning spatial features within each slice and inter-slice features across the volume, using morphological analysis to focus attention on clinically relevant lung regions.

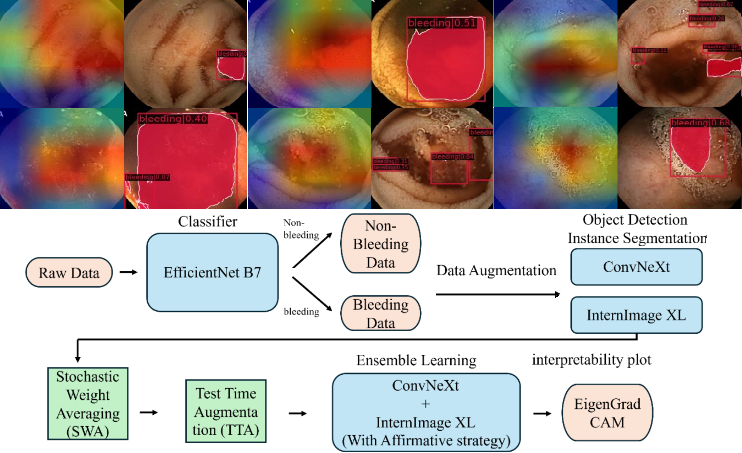

Divide and Conquer: Grounding a Bleeding Areas in Gastrointestinal Image with Two-Stage Model

Yu-Fan Lin, Bo-Cheng Qiu, Chia-Ming Lee, Chih-Chung Hsu

Locates bleeding regions in gastrointestinal endoscopy images using a two-stage pipeline that first detects candidate areas and then precisely segments them, improving sensitivity for small or subtle lesions.

Bag of Tricks of Hybrid Network for Covid-19 Detection of CT Scans

Chih-Chung Hsu, Chih-Yu Jian, Chia-Ming Lee, Chi-Han Tsai, Shen-Chieh Tai

Diagnoses COVID-19 from CT scans by combining CNN and transformer branches in a hybrid network, augmented with a collection of training and inference tricks (augmentation, test-time ensembling, etc.) to maximize detection accuracy.

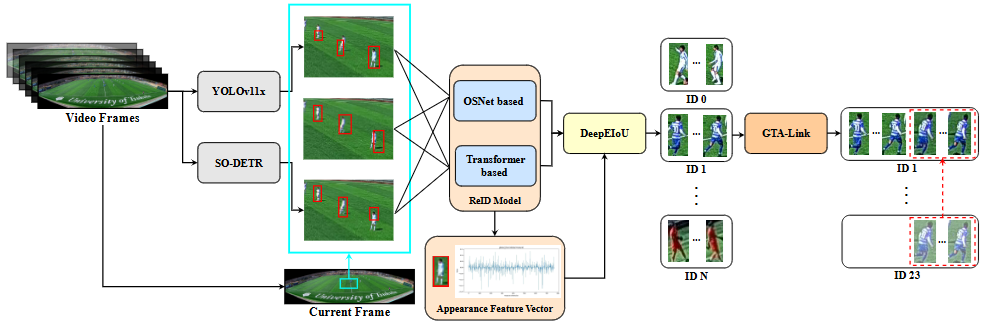

GTATrack: Winner Solution to SoccerTrack 2025 with Deep-EIoU and Global Tracklet Association

Rong-Lin Jian, Ming-Chi Luo, Cheng-Wei Huang, Chia-Ming Lee, Yu-Fan Lin, Chih-Chung Hsu

Tracks soccer players in fisheye video by combining Deep-EIoU tracking with a global tracklet association strategy, handling severe occlusion and wide-angle distortion to achieve winning performance at SoccerTrack 2025.

OmniDet: Omnidirectional Object Detection via Fisheye Camera Adaptation

Chih-Chung Hsu, Wei-Hao Huang, Wen-Hai Tseng, Ming-Hsuan Wu, Ren-Jung Xu, Chia-Ming Lee

Adapts standard object detectors to handle the extreme radial distortion of fisheye cameras, enabling reliable 360° omnidirectional detection without requiring purpose-built hardware or full dataset re-annotation.

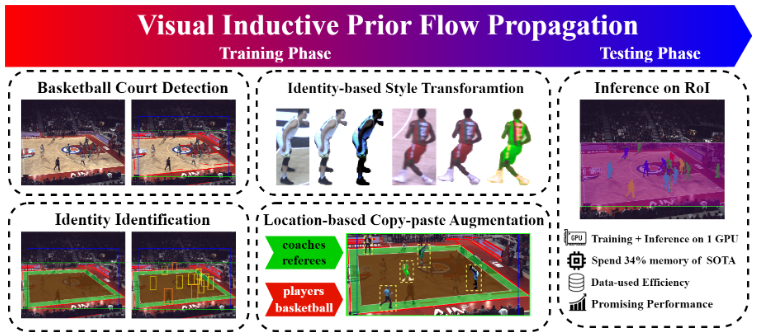

MISS: Memory-efficient Instance Segmentation Framework By Visual Inductive Priors Flow Propagation

Chih-Chung Hsu, Chia-Ming Lee

Performs instance segmentation with low memory overhead by propagating visual inductive priors across frames, enabling accurate object masking even under a few-shot setting without storing large feature maps.

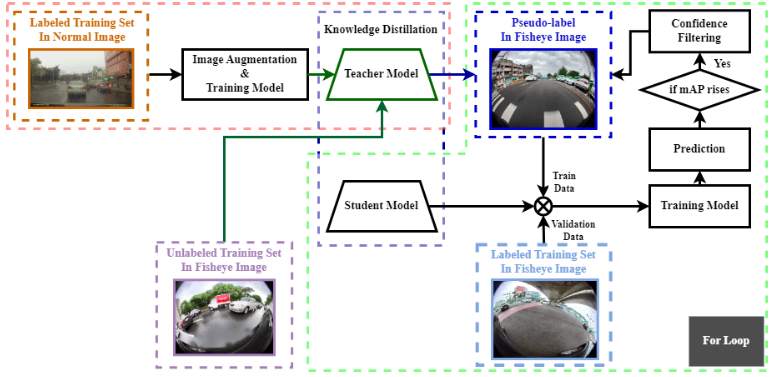

Adapting Object Detection to Fisheye Cameras: A Knowledge Distillation with Semi-Pseudo-Label Approach

Chih-Chung Hsu, Wen-Hai Tseng, Ming-Hsuan Wu, Chia-Ming Lee, Wei-Hao Huang

Adapts pretrained object detectors to fisheye cameras using a combination of knowledge distillation and semi-supervised pseudo-labels, reducing the need for expensive fisheye-specific annotations.

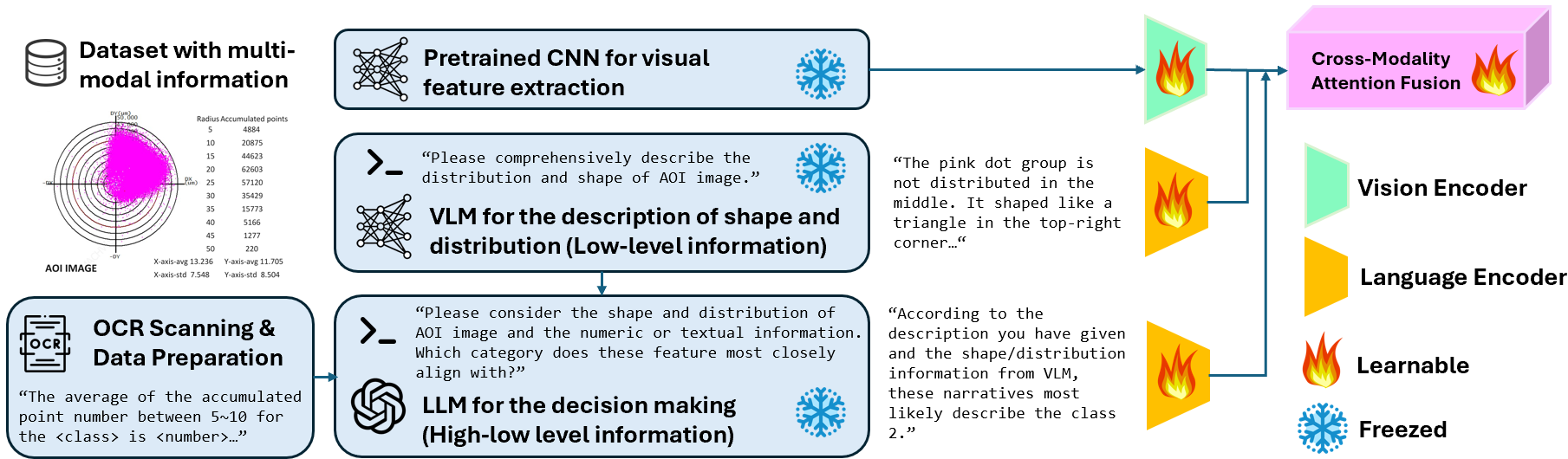

OCR is All you need: Importing Multi-Modality into Image-based Defect Classification System

Chih-Chung Hsu, Chia-Ming Lee, Po-Tsun Yu, Chun-Hung Sun, Kuang-Ming Wu

Enriches image-based defect classification by extracting text from product labels via OCR and fusing it with visual features, allowing the model to leverage both appearance and specification information for more accurate industrial defect detection.