Shadow removal under diverse lighting requires disentangling illumination from intrinsic reflectance, which is especially difficult when physical priors are misaligned.

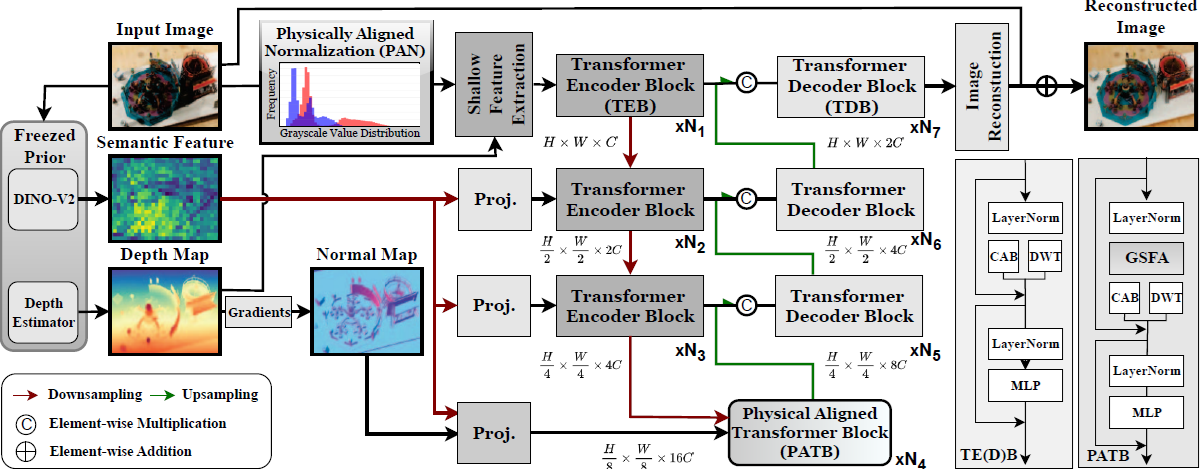

We propose PhaSR, which aligns priors at two levels: (1) Physically Aligned Normalization (PAN) performs parameter-free illumination correction via gray-world normalization and log-domain Retinex decomposition, suppressing chromatic bias. (2) Geometric-Semantic Rectification Attention (GSRA) extends differential attention to harmonize depth-derived geometry with DINO-v2 semantics, resolving conflicts between the two modalities across illumination conditions.
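The two classical operations that PAN builds on can be sketched in NumPy as follows, assuming an RGB image in [0, 1] with shape (H, W, 3). The illumination estimate uses a single-scale Gaussian surround, a standard Retinex choice that may differ from PhaSR's actual estimator; the function names and the `sigma` parameter are hypothetical.

```python
import numpy as np

EPS = 1e-6  # guards against log(0) and division by zero


def gray_world(img: np.ndarray) -> np.ndarray:
    """Gray-world normalization: rescale each channel so its mean equals the mean over all channels."""
    channel_means = img.reshape(-1, img.shape[-1]).mean(axis=0)  # shape (C,)
    gray = channel_means.mean()
    return img * (gray / (channel_means + EPS))


def _gaussian_kernel(sigma: float) -> np.ndarray:
    radius = max(1, int(3 * sigma))
    x = np.arange(-radius, radius + 1, dtype=np.float64)
    k = np.exp(-0.5 * (x / sigma) ** 2)
    return k / k.sum()


def _blur(channel: np.ndarray, sigma: float) -> np.ndarray:
    """Separable Gaussian blur of a 2-D array with reflect padding (NumPy only)."""
    k = _gaussian_kernel(sigma)
    r = len(k) // 2
    padded = np.pad(channel, r, mode="reflect")
    rows = np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 0, rows)


def log_retinex(img: np.ndarray, sigma: float = 15.0):
    """Log-domain Retinex split: log I = log R + log L, with L a smoothed copy of I."""
    log_i = np.log(img + EPS)
    illum = np.stack([_blur(img[..., c], sigma) for c in range(img.shape[-1])], axis=-1)
    log_l = np.log(illum + EPS)  # low-frequency illumination estimate
    log_r = log_i - log_l        # reflectance residual
    return log_r, log_l


# Usage on a synthetic image with a warm color cast.
rng = np.random.default_rng(0)
img = rng.uniform(0.05, 1.0, size=(32, 32, 3)) * np.array([1.0, 0.8, 0.6])
balanced = gray_world(img)
log_r, log_l = log_retinex(balanced, sigma=4.0)
```

Because log R is defined as the residual log I − log L, the split is exact by construction; the modeling choice lies entirely in how the illumination L is estimated.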

Experiments show that PhaSR achieves competitive performance with lower complexity and generalizes to ambient lighting, where traditional methods fail.

A multi-scale Transformer encoder-decoder integrates frozen DINO-v2 semantic features and DepthAnything-v2 geometric priors via GSRA's cross-modal differential attention ($\mathbf{A}_\text{rect} = \mathbf{A}_\text{sem} - \lambda \cdot \mathbf{A}_\text{geo}$).

⚡ Geometric-Semantic Rectification Attention (GSRA)

Real-world scenes carry two physically distinct signals that respond very differently to illumination. Geometric priors (depth and surface normals from DepthAnything-v2) are sharp at shadow boundaries but noisy in uniformly lit regions. Semantic embeddings (DINO-v2) stay stable across lighting changes (a red apple is always semantically a red apple) but are spatially coarse. GSRA harmonizes these two modalities through cross-modal differential attention, subtracting a weighted geometric attention map from the semantic one:

$$\mathbf{A}_\text{rect} = \mathbf{A}_\text{sem} - \lambda \cdot \mathbf{A}_\text{geo}$$

The subtraction suppresses geometric noise in uniformly lit regions while preserving geometric precision at true illumination boundaries, producing features that balance local edge sharpness with global material consistency.
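The rectified attention $\mathbf{A}_\text{rect} = \mathbf{A}_\text{sem} - \lambda \cdot \mathbf{A}_\text{geo}$ can be illustrated with a short NumPy sketch. It assumes the semantic map comes from queries and keys projected from DINO-v2-like tokens, the geometric map from depth-derived tokens, and the values from the image tokens; the projection layout, the dict `w`, and the fixed `lam` are hypothetical placeholders, not PhaSR's actual GSRA parameterization.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)  # subtract row max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def attention_map(q: np.ndarray, k: np.ndarray) -> np.ndarray:
    """Scaled dot-product attention weights of shape (N, N); each row sums to 1."""
    return softmax(q @ k.T / np.sqrt(q.shape[-1]), axis=-1)


def rectified_attention(x, sem, geo, w, lam=0.5):
    """Cross-modal differential attention: A_rect = A_sem - lam * A_geo.

    x:   (N, D)  image tokens supplying the values
    sem: (N, Ds) semantic tokens (e.g. frozen DINO-v2 features)
    geo: (N, Dg) geometric tokens (e.g. depth-derived features)
    w:   dict of hypothetical projection matrices
    """
    a_sem = attention_map(sem @ w["q_sem"], sem @ w["k_sem"])
    a_geo = attention_map(geo @ w["q_geo"], geo @ w["k_geo"])
    a_rect = a_sem - lam * a_geo
    return a_rect @ (x @ w["v"]), a_rect


# Usage with random tokens: 16 tokens, 16-dim attention head.
rng = np.random.default_rng(0)
N, D, Ds, Dg, Dh = 16, 32, 24, 8, 16
x = rng.standard_normal((N, D))
sem = rng.standard_normal((N, Ds))
geo = rng.standard_normal((N, Dg))
w = {
    "q_sem": rng.standard_normal((Ds, Dh)), "k_sem": rng.standard_normal((Ds, Dh)),
    "q_geo": rng.standard_normal((Dg, Dh)), "k_geo": rng.standard_normal((Dg, Dh)),
    "v": rng.standard_normal((D, Dh)),
}
out, a_rect = rectified_attention(x, sem, geo, w, lam=0.5)
```

Since each softmax row sums to 1, every row of `a_rect` sums to 1 − λ, and individual entries become negative wherever the weighted geometric attention exceeds the semantic attention.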

🎬 Physically Aligned Normalization (PAN) — Distribution Visualization

PAN is a parameter-free preprocessing module that corrects illumination before any learned feature extraction. The animation below illustrates how PAN decomposes the input pixel-intensity distribution, identifies shadow pixels as outliers on the distribution tail, and recombines the components so that those outliers are pulled back into the normal range, suppressing shadows without a single trainable weight.
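To make the outlier picture concrete, the NumPy sketch below flags dark-tail pixels with a robust median/MAD z-score in log-intensity space and compresses their deviation toward the bulk of the distribution. This is a generic statistical stand-in chosen for illustration, not PhaSR's actual PAN recombination rule; `pull_back_dark_tail`, `k`, and `alpha` are hypothetical.

```python
import numpy as np

EPS = 1e-6  # guards against log(0)


def pull_back_dark_tail(intensity: np.ndarray, k: float = 2.0, alpha: float = 0.3):
    """Flag dark-tail outliers via a robust z-score in log space and shrink their deviation.

    Pixels with z < -k are treated as shadow candidates; their distance beyond -k
    is scaled by alpha (< 1), moving them toward the normal range. Returns
    (corrected, outlier_mask); non-outlier pixels are left unchanged.
    """
    log_i = np.log(intensity + EPS)
    med = np.median(log_i)
    mad = np.median(np.abs(log_i - med)) * 1.4826 + EPS  # approx. std for Gaussian data
    z = (log_i - med) / mad
    mask = z < -k                                    # low-brightness tail
    z_new = np.where(mask, -k + alpha * (z + k), z)  # compress only the tail
    corrected = np.exp(med + z_new * mad) - EPS
    return corrected, mask


# Usage: a lit scene with a hypothetical cast shadow over the left 12 columns.
rng = np.random.default_rng(0)
img = rng.uniform(0.5, 0.9, size=(64, 64))
img[:, :12] *= 0.1
out, mask = pull_back_dark_tail(img)
```

The median and MAD are used instead of the mean and standard deviation so that the shadow pixels themselves do not inflate the spread estimate used to detect them.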

Each panel shows the pixel-intensity histogram at a different stage of PAN. Red bars represent shadow-region pixels clustered at the low-brightness end; blue bars represent the normal (non-shadow) distribution. After PAN, the red distribution shifts toward brighter values (shadows become less dark), and the two distributions converge into a more uniform output. The darker the residue map (GT − Î), the better the correction.
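The histograms and residue map described above can be computed with a few lines of NumPy, assuming images in [0, 1] and a known boolean shadow mask. Taking the absolute value of GT − Î (so that darker means smaller error) and the synthetic "corrected" image are illustrative assumptions; the function names are hypothetical.

```python
import numpy as np


def stage_histograms(img, shadow_mask, bins=32):
    """Intensity histograms for shadow (red) and non-shadow (blue) pixels."""
    gray = img.mean(axis=-1)  # (H, W) luminance proxy
    edges = np.linspace(0.0, 1.0, bins + 1)
    h_shadow, _ = np.histogram(gray[shadow_mask], bins=edges)
    h_normal, _ = np.histogram(gray[~shadow_mask], bins=edges)
    return h_shadow, h_normal, edges


def hist_mean(hist, edges):
    """Mean intensity implied by a histogram (bin centers weighted by counts)."""
    centers = 0.5 * (edges[:-1] + edges[1:])
    return float((hist * centers).sum() / hist.sum())


def residue_map(gt, pred):
    """Per-pixel |GT - I_hat| averaged over channels; darker means a better correction."""
    return np.abs(gt - pred).mean(axis=-1)


# Synthetic example: a shadow darkens the left part of the frame.
rng = np.random.default_rng(0)
gt = rng.uniform(0.4, 0.8, size=(32, 32, 3))
mask = np.zeros((32, 32), dtype=bool)
mask[:, :10] = True
shadowed = gt.copy()
shadowed[mask] *= 0.3   # shadow region
corrected = shadowed.copy()
corrected[mask] *= 2.0  # hypothetical partial correction
for name, im in [("input", shadowed), ("corrected", corrected)]:
    h_s, h_n, edges = stage_histograms(im, mask)
    print(name, round(hist_mean(h_s, edges), 3), round(float(residue_map(gt, im).mean()), 4))
```

Running the loop shows the shadow histogram's mean moving toward brighter values and the mean residue shrinking after the correction, which is the behavior the panels visualize.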

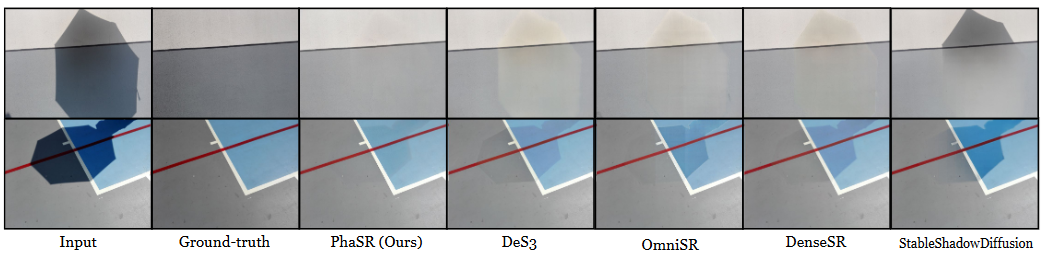

Visual comparisons: PhaSR (Ours) vs. DenseSR, ShadowRefiner, OmniSR, and StableShadowDiffusion.

@inproceedings{lee2026phasr,
  title = {PhaSR: Generalized Image Shadow Removal with Physically Aligned Priors},
  author = {Lee, Chia-Ming and Lin, Yu-Fan and Hsiao, Yu-Jou and Jiang, Jin-Hui and Liu, Yu-Lun and Hsu, Chih-Chung},
  booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
  year = {2026},
}